Your cart is empty

Generative AI companies are growing at an incredible pace. From intelligent chatbots to advanced coding assistants, modern AI systems now process billions of parameters and massive amounts of data every day.

Companies like Anthropic are helping drive this transformation by developing advanced large language models designed to perform complex reasoning and natural conversations. But behind every powerful AI model is something even more important: deep learning infrastructure.

Without modern AI infrastructure, even the most advanced AI models would struggle to train, scale, or deliver reliable responses to users.

For CTOs, AI engineers, and enterprise leaders, understanding how deep learning infrastructure works is becoming essential. It is no longer just a backend IT topic, it is the foundation of competitive AI innovation.

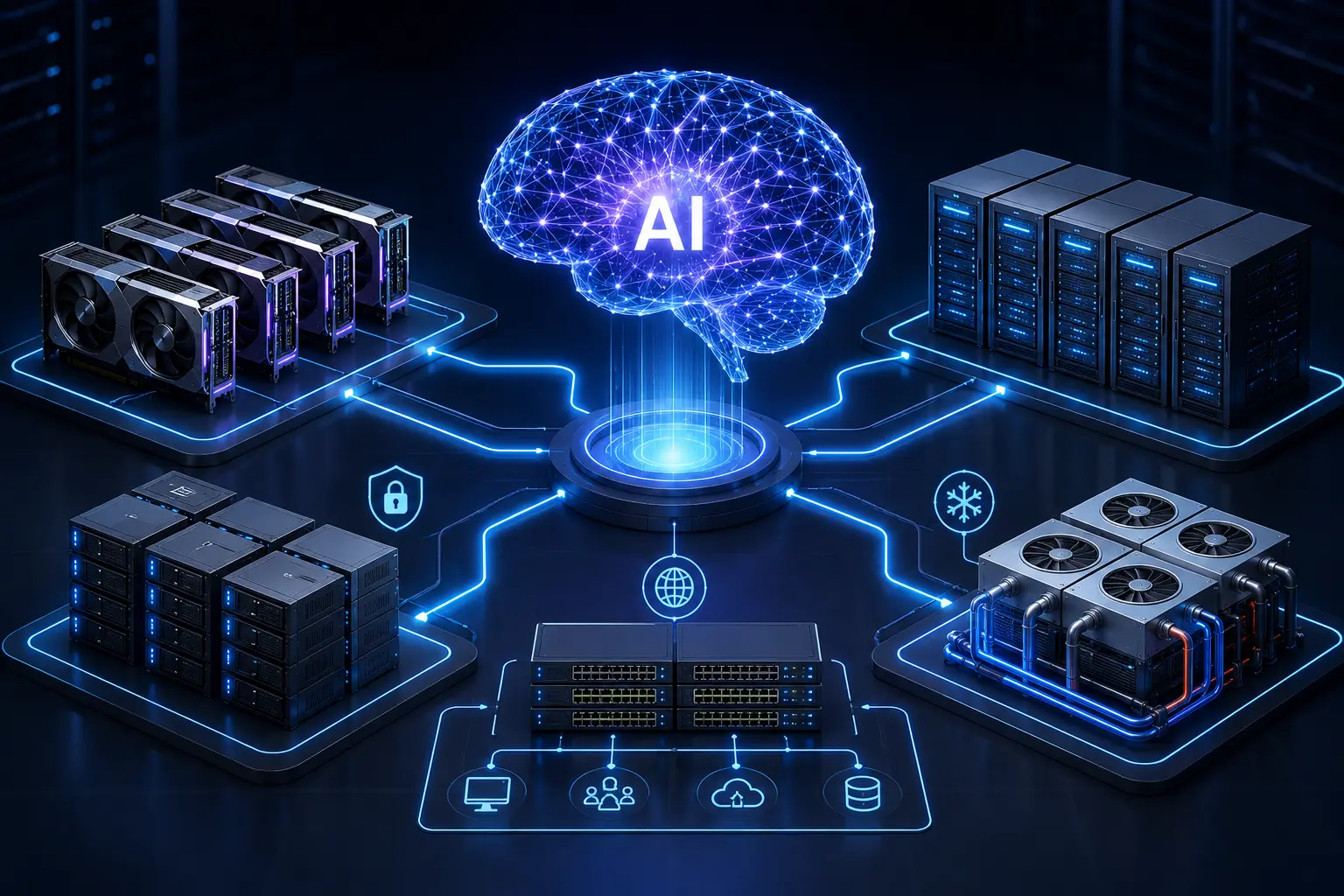

Deep learning infrastructure refers to the hardware, networking, storage, and software systems required to train and run modern AI models.

Think of it as the “industrial foundation” behind artificial intelligence.

If an AI model is the brain, the infrastructure is everything that keeps the brain functioning:

GPUs provide computational power

AI servers coordinate workloads

Networking moves data between systems

Storage platforms feed training datasets

Cooling and power systems keep everything stable

Modern AI companies depend on highly optimized AI compute infrastructure because training large models requires enormous processing capability.

Large AI models can contain billions or even trillions of parameters. Training them involves processing huge datasets repeatedly across thousands of GPU cores.

Training becomes too slow

Costs increase dramatically

Inference performance suffers

System reliability decreases

This is why advanced enterprise AI systems are designed around performance, scalability, and uptime.

Modern AI companies operate at a scale far beyond traditional software businesses.

Training a large language model is similar to building a massive digital factory. Every component must work together continuously and efficiently.

Training modern AI models requires thousands of GPUs working simultaneously.

These GPUs process mathematical operations in parallel, dramatically accelerating deep learning tasks compared to traditional CPUs.

AI training datasets are enormous. High-speed networking is critical for moving data between GPU clusters without delays.

Even small bottlenecks can slow down training significantly.

After training, AI models must respond quickly to user requests.

Low-latency infrastructure ensures users receive fast, accurate outputs, whether they are asking questions, generating code, or analyzing documents.

GPU clusters are one of the most important components of modern deep learning infrastructure.

A GPU cluster is a group of interconnected graphics processing units working together as a unified compute system.

Instead of relying on a single machine, AI workloads are distributed across many GPUs.

Imagine trying to move an entire library by yourself.

It would take weeks.

Now imagine 1,000 people moving books together simultaneously. The process becomes dramatically faster.

That is essentially how GPU clusters accelerate large language model training.

Faster Training

Parallel processing allows AI models to train in days or weeks instead of months.

Better Scalability

Infrastructure can expand as AI workloads grow.

Higher Reliability

Distributed systems reduce the risk of single points of failure.

Efficient Resource Allocation

AI teams can dynamically allocate compute resources based on workload demands.

Modern AI systems rely on multiple infrastructure layers working together seamlessly.

GPUs are the computational engine behind AI training and inference.

AI servers house these GPUs and provide:

High memory bandwidth

Multi-GPU coordination

Optimized AI workloads

Enterprise reliability

Modern AI infrastructure often includes rack-scale GPU deployments designed specifically for machine learning operations.

AI workloads require constant communication between servers and GPUs.

Low-latency networking technologies help:

Synchronize distributed training

Reduce communication delays

Improve training efficiency

Support real-time inference

Without fast networking, even powerful GPUs can become underutilized.

AI models rely on massive datasets.

Storage infrastructure must deliver:

High throughput

Fast data access

Reliable redundancy

Scalable capacity

For modern AI companies, storage performance directly impacts model training speed.

AI infrastructure consumes enormous amounts of electricity.

High-density GPU environments generate significant heat, making advanced cooling systems essential.

Modern data centers increasingly rely on:

Liquid cooling

Precision airflow management

Redundant power systems

Energy-efficient infrastructure design

Cooling is no longer just a facilities concern, it is now a core AI scalability requirement.

Scalable infrastructure enables AI organizations to innovate faster while maintaining operational reliability.

More compute power allows teams to:

Train larger models

Experiment more frequently

Reduce development timelines

Accelerate research iterations

This creates a major competitive advantage in the fast-moving AI industry.

Inference infrastructure determines how quickly users receive outputs.

Efficient AI compute infrastructure improves:

User experience

API responsiveness

Real-time AI applications

Enterprise deployment performance

Enterprise AI applications require continuous availability.

Infrastructure resilience helps ensure:

Stable performance

Redundant failover systems

Reduced downtime

Consistent service delivery

For AI companies serving global users, uptime is critical.

Building AI infrastructure at scale introduces several operational challenges.

GPU clusters consume significantly more power than traditional servers.

This increases demands on:

Electrical systems

Rack design

Facility engineering

Energy efficiency planning

Cooling Complexity

As GPU density rises, cooling becomes increasingly difficult.

Traditional air cooling may struggle to handle modern AI workloads efficiently.

This is why advanced cooling strategies are becoming standard in enterprise AI environments.

Global demand for AI GPUs continues to grow rapidly.

Organizations often face challenges involving:

Hardware procurement

Capacity planning

Deployment timelines

Cost management

AI workloads evolve quickly.

Infrastructure must scale without causing operational disruption or performance bottlenecks.

Flexible, modular infrastructure design is becoming essential for long-term AI growth.

As organizations expand their AI initiatives, infrastructure decisions become increasingly strategic.

Exeton Computer Network & Infrastructure Installation & Maintenance L.L.C S.O.C helps enterprises build scalable AI environments through solutions designed for modern deep learning workloads, including:

GPU infrastructure deployments

Enterprise AI servers

High-performance data center solutions

Scalable AI compute environments

SLA-backed infrastructure support

For businesses deploying advanced AI applications, infrastructure reliability and scalability can directly influence operational success.

Deep learning infrastructure includes the hardware and systems required to train and run AI models, such as GPUs, servers, networking, storage, and cooling systems.

GPU clusters allow AI workloads to run in parallel, dramatically improving training speed, scalability, and performance for large AI models.

Common challenges include power consumption, cooling requirements, GPU availability, infrastructure scaling, and maintaining reliable uptime.

Modern AI infrastructure enables faster data processing, distributed computing, and efficient resource utilization, helping reduce training times significantly.

Enterprise AI systems must handle large workloads reliably while delivering fast response times, scalability, and high availability.

As AI adoption accelerates, organizations need infrastructure designed specifically for modern deep learning workloads.

Exeton Computer Network & Infrastructure Installation & Maintenance L.L.C S.O.C delivers enterprise-grade AI infrastructure solutions that help businesses scale AI operations with performance, reliability, and long-term flexibility in mind.